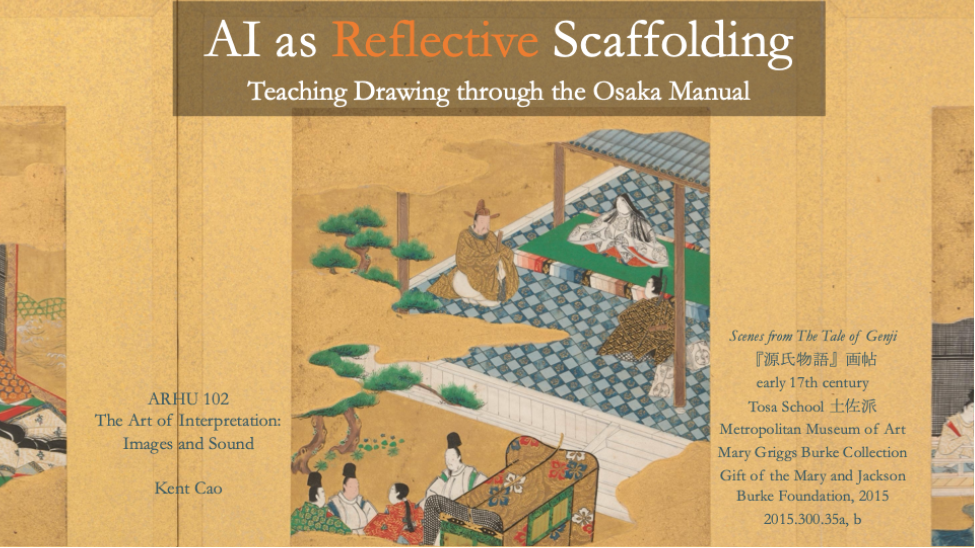

AI as Reflective Scaffolding: Teaching Drawing through the Osaka Manual

Course Context

In Spring 2026 I taught ARHU 102: The Art of Interpretation: Images and Sound at Duke Kunshan University, a foundational humanities course that introduces first- and second-year undergraduates to visual and audiovisual literacy. The course is built on Graeme Sullivan’s model of practice-led research, which holds that creative making, and the creative work it produces, can serve as rigorous methods of academic research. Instead of traditional academic essays, students produce creative projects (photo essays, zines, essay films, and audiovisual essays) alongside critical reflections.

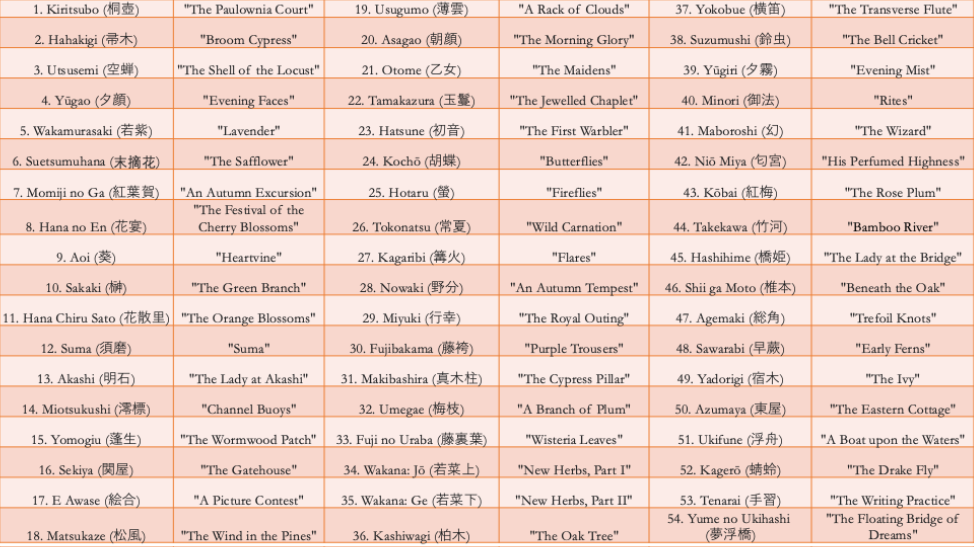

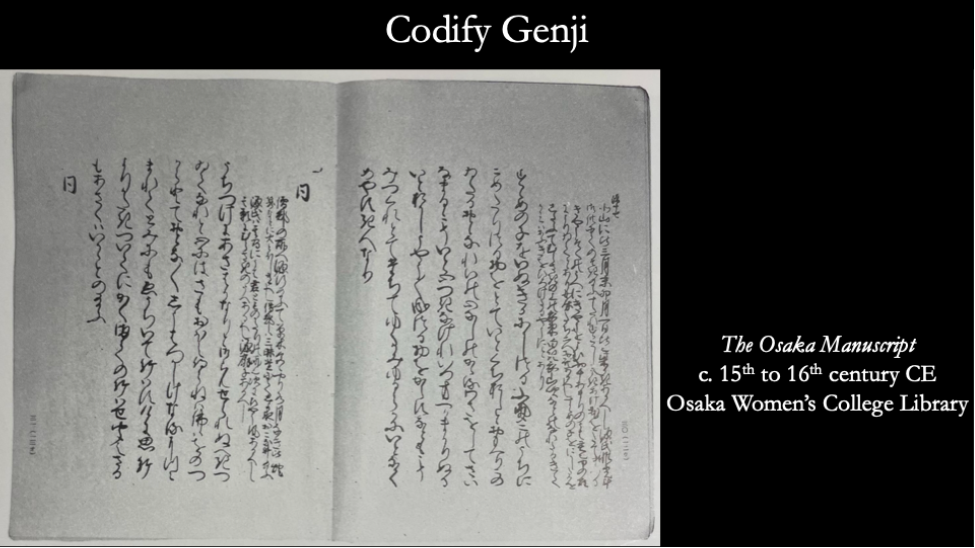

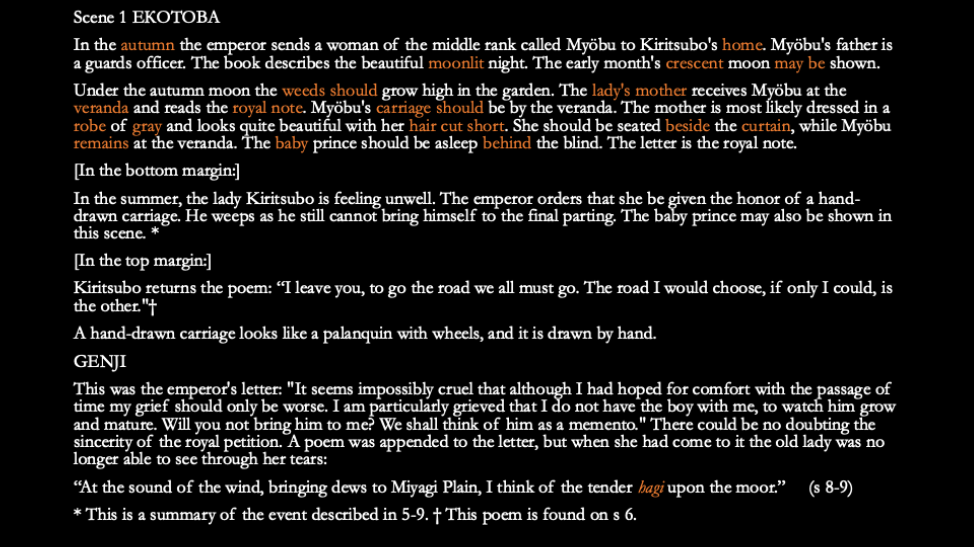

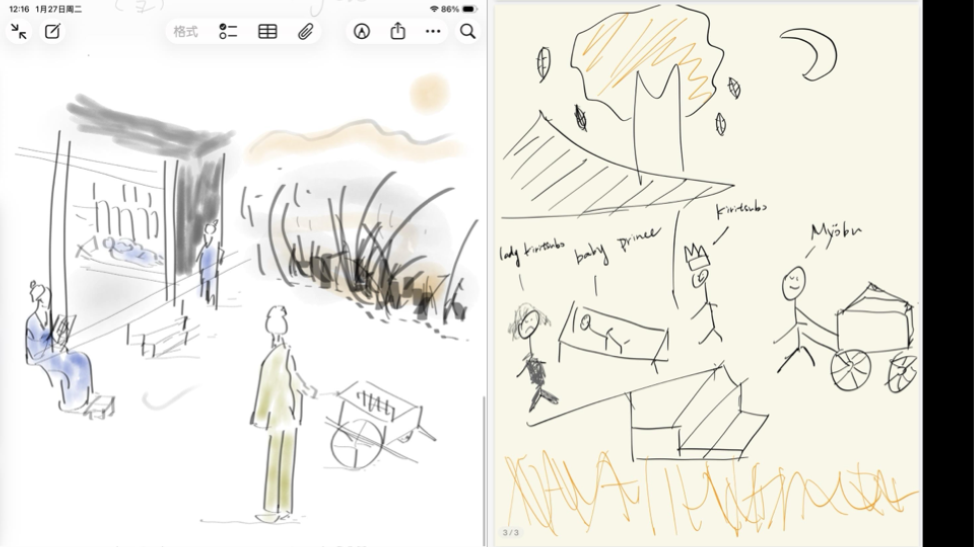

This past semester, one unit focused on The Tale of Genji (early 11th-century Japan) and the Osaka Manual (c. 15th–16th century), a codified set of drawing instructions that told artists how to illustrate the novel’s 54 chapters. The assignment asked students to translate dense textual instructions from the Osaka Manual into their own drawings and then write reflections analyzing the gap between textual instruction, embodied practice, and visual interpretation.

The real challenge was not drawing skill. It was understanding how premodern Japanese artists worked, what is lost or gained in translation from text to image, and how hands-on creative practice teaches differently from reading alone.

The Tensions GenAI Raised

As I designed this assignment, several questions surfaced that made me rethink assessment entirely:

- How do I assess experiential learning fairly? Drawing ability varies widely among first- and second-year students. Some have years of training in oil and Chinese ink painting, while others might hesitate to draw even a snowman. I wanted to evaluate problem-solving, translation from text to image, creative decision-making, critical thinking, and metacognition, not just technical execution.

- How do I ensure genuine reflection? Students can easily outsource writing to AI. How could I make reflection non-delegable while keeping it intellectually rigorous?

- What is my role if students can just ask ChatGPT for explanations? If AI can interpret the Osaka Manual’s instructions, am I abdicating my teaching responsibility by not explaining everything myself?

- How do I prepare students for a world with AI without either banning it or letting it do their thinking?

The deeper question was: Can AI actually help students think more critically about what embodied practice teaches, rather than replacing that thinking?

What I Tried

I designed a two-part AI scaffolding approach that positioned AI as a tool for making thinking visible, not a replacement for intellectual labor.

Part 1: AI as Comparative Lens

After students completed multiple drawing iterations based on the Osaka Manual’s instructions, I asked them to prompt an AI chatbot (ChatGPT or Gemini) with:

“Explain what this Manual instruction prioritizes in form, movement, and hierarchy.”

Then students had to:

- Compare AI’s interpretation with their own drawing choices

- Identify where AI clarified something they’d missed or misunderstood

- Note where AI flattened cultural complexity or missed visual nuance

- Recognize where their embodied practice contradicted or complicated the written text

This critique of AI became part of their written reflection. The assessment was not “Did you use AI correctly?” but rather “How critically can you read AI’s interpretation against your own lived experience of translating text into image?”

Part 2: AI as Socratic Interlocutor

Rather than having AI write reflections for them, I asked students to prompt:

“Ask me three questions that would help me think more deeply about translating text into drawing.”

Students then responded to at least one AI-generated question in two or three substantive sentences. I graded the depth and honesty of their responses, looking for evidence of thinking rather than polish or eloquence.

This structure meant:

- Drawing remained non-delegable (you cannot outsource manual skill or embodied experience)

- Reflection became dialogic and situated (students had to engage with their own specific work and choices)

- AI use was transparent and pedagogically purposeful (students knew exactly why it was part of the assignment)

- Students learned to evaluate AI output critically rather than defer to it as an authority

How It Went

The results genuinely surprised me. Students wrote thoughtful, metacognitive reflections. Here are excerpts from actual student work:

“The instruction text contains complicated scenes and lots of characters. When drawing without being familiar to Japanese culture, I did not know what can be omitted and what should be kept. So I tried to keep as many things mentioned as possible. However Japanese painters can wisely choose what to keep that will not influence readers’ understanding.”

This student articulated something crucial: cultural literacy shapes visual interpretation. You need to know what your audience knows in order to decide what to show.

“When I actually picked up the pen and started to draw, I found that my skills limited the presentation. Besides, I realized how many details I didn’t know. For instance, what expressions did the three main characters have? How are their positions arranged? What were the specific interior decorations like in Japan at that time? There is a big gap between the conception in one’s mind and the actual writing.”

Here, the student identified the epistemological gap between knowing and doing. This is a core insight in practice-led research that is often difficult to articulate without first experiencing it.

“I wanted to convey the sorrow and affective state of Lady Kiritsubo. Although I was not successful in drawing out this aspect, I attempted to do so through subtle visual choices, for instance, by drawing her eyebrows slightly downward. Compared to more formal, ritualized scenes, this moment felt more inward and intimate. This may help explain why such scenes are often avoided in visual representations, as courtly artworks prioritize political power rather than emotional vulnerability.”

This reflection demonstrates sophisticated historical thinking. The student moved from technical problem-solving to interpretive analysis, asking why certain scenes appear more frequently in historical visual culture and connecting formal choices to ideological functions.

These reflections were not just describing what students drew. They were articulating the gap between textual knowledge and embodied practice, the cultural assumptions rooted in visual conventions, and the limits of both written instruction and AI interpretation.

Unexpected Discovery: AI as a Pattern-Recognition Tool for Teaching

I also experimented with using AI as a pattern-identification tool for my own pedagogical assessment. After carefully reading all 18 student reflections, I asked AI to generate a searchable index of recurring themes:

“How many students mentioned struggles with visual economy? How many connected their work to broader cultural or historical contexts? What patterns emerge around technical vs. interpretive challenges?”

AI surfaced patterns I had sensed intuitively but could not easily quantify. I still interpreted what those patterns meant pedagogically. But AI made the evidence visible and helped counter the cognitive distortion that comes from reading 18 reflections in sequence and unconsciously privileging the most recent or the most eloquent. It felt like using a spectrometer in a lab: the instrument does not replace scientific judgment; it extends perception. The tool gave me data. I provided the humanistic interpretation.

One Thing I'd Tell Colleagues Just Starting to Think About This

Design assignments where the core intellectual labor is non-delegable. Then use AI strategically to make students’ thinking visible, not to bypass it.

When students turned to AI for explanations instead of asking me directly, something pedagogically valuable happened. They stopped seeking confirmation and started evaluating. They encountered a confident but imperfect interpretation and had to decide whether it made sense. My role shifted from explainer to interpreter, critic, and guide. Students came to me with better questions because they had already tested their initial ideas against an algorithmic voice that could not account for embodied experience, cultural context, or the productive failures inherent in creative practice.

This approach does not work for every assignment. But for experiential, practice-led learning where the goal is metacognition, process awareness, and critical judgment, AI can function as a dialogic mirror that forces students to articulate and defend their own reasoning. The key is transparency and intentional design. Students knew exactly why AI was part of the assignment, what it could and could not do, and that their critical engagement with it was being assessed. No mystery, no blanket prohibition, no outsourcing. Just a tool used thoughtfully within a carefully designed pedagogical structure that kept non-delegable student thinking at the center.

This piece is part of a collection of reflections shared by members of the 2025-26 Faculty Learning Community: Assessment in the Age of GenAI.