AI as a Structured Reading Partner: Enhancing Journal Club Engagement in Cognitive Psychology

What I experimented with

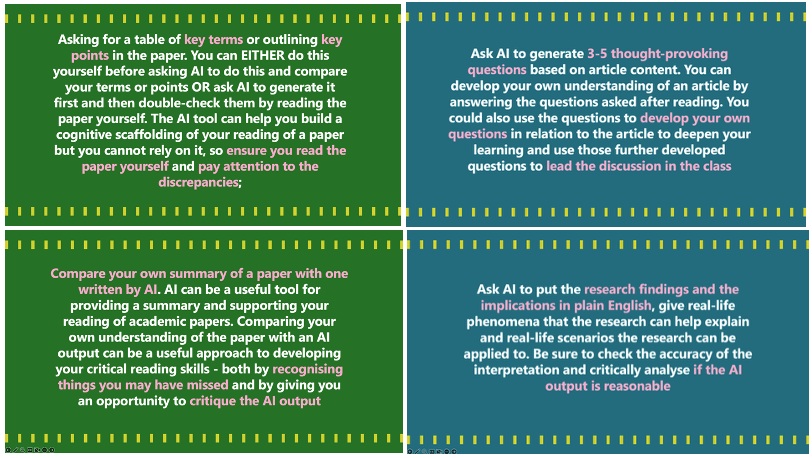

In PSYCH 202 Cognitive Psychology, I experimented with using AI as a structured reading partner in our weekly Journal Club from Week 2 to Week 6. Each week, students read one article that I assigned to the whole class and one self-selected article related to the weekly topic. They used an AI chatbot to support four parts of academic reading: (1) identifying key terms or outlining the paper, (2) generating discussion questions, (3) comparing their own summary with an AI-generated summary, and (4) translating the findings into plain language with real-life applications. Before class, students submitted the prompts they used, the AI outputs they received, and a short bullet-point reflection on discrepancies between their own reading and the AI’s response. In class, they shared both the article content and their reflections on the AI-assisted reading process.

What I learnt

Positive aspects:

One positive reflection from students was that AI lowered the entry barrier to difficult academic readings. Students used AI to clarify key terms, understand the structure of a paper, and make sense of unfamiliar methods. This seemed especially helpful when the article involved complex theoretical arguments, methods, or statistical models.

Some students also used AI in a more interactive way, almost like a tutor. Rather than only asking for a summary, they asked follow-up questions such as “walk me through Experiment 4,” “are you sure they controlled for baseline differences,” or “which phrase shows that?” I found this especially encouraging because it showed that some students were not passively accepting the output. They were learning how to interrogate AI and push it for evidence.

Negative aspects:

There were also clear risks. Some students may have relied too much on AI summaries without enough independent verification. In a few cases, the discrepancy reflections were quite generic, which made me wonder whether the reflections themselves may also have been generated by AI. For example, comments such as “AI was accurate, but my version was more detailed,” “the AI summary was concise but missed nuance,” or “the real-life applications were reasonable but could be deeper” could be genuine, but they are also the kind of vague reflection that AI can easily produce.

Another concern is that AI can give students false confidence. Because AI writes fluently and coherently, students may assume that it has understood the paper. In class, I occasionally saw students share AI-generated information with confidence even when the interpretation was incomplete or inaccurate.

Something unexpected:

One unexpected and interesting observation was that some students compared multiple AI tools. They used models such as free ChatGPT, paid ChatGPT, DKU ChatGPT, Google Gemini, DeepSeek, and Kimi for the same assignment and noticed differences in response style, memory capacity, level of detail, and output quality. This was not something I had explicitly designed into the assignment. I initially asked everyone to use DKU ChatGPT, but soon noticed that many students were using other, sometimes more advanced, tools, or comparing multiple models.

This led to an important discussion about equity in class. Because the assignment was graded, some students worried that access to a stronger model could affect the quality of their submission and therefore their grade. At the same time, some models cost more than others, require overseas registration, or need an overseas phone number or account, which not all students can access equally. In this assignment, the discrepancy analysis helped reduce this concern because students were not graded on whether AI produced the “best” answer, but on how thoughtfully they evaluated and revised the output. However, it still raised a concern about fairness when AI is allowed or encouraged in graded assignments, and more generally about unequal access to AI tools in university learning.

What I learnt from this experiment is that AI-integrated assignments or AI-assisted learning need to be designed with fairness and access in mind. If students are encouraged or required to use AI, instructors need to consider whether all students have comparable access to the tools, whether the grading criteria reward critical engagement rather than the quality of the AI output itself, and whether a university-supported tool should be used as the baseline option.

Overall, I found the experiment to be a positive experience. The most significant pedagogical value for me was that AI made students’ thinking and learning process more visible. By reading their prompts, AI outputs, and discrepancy analyses, I could see how they approached the paper, where they misunderstood something, what the AI missed, and how their critical judgment developed over time. In a normal journal club, much of this reading process remains hidden.

This piece is part of a collection of reflections shared by members of the 2025-26 Faculty Learning Community: Assessment in the Age of GenAI.